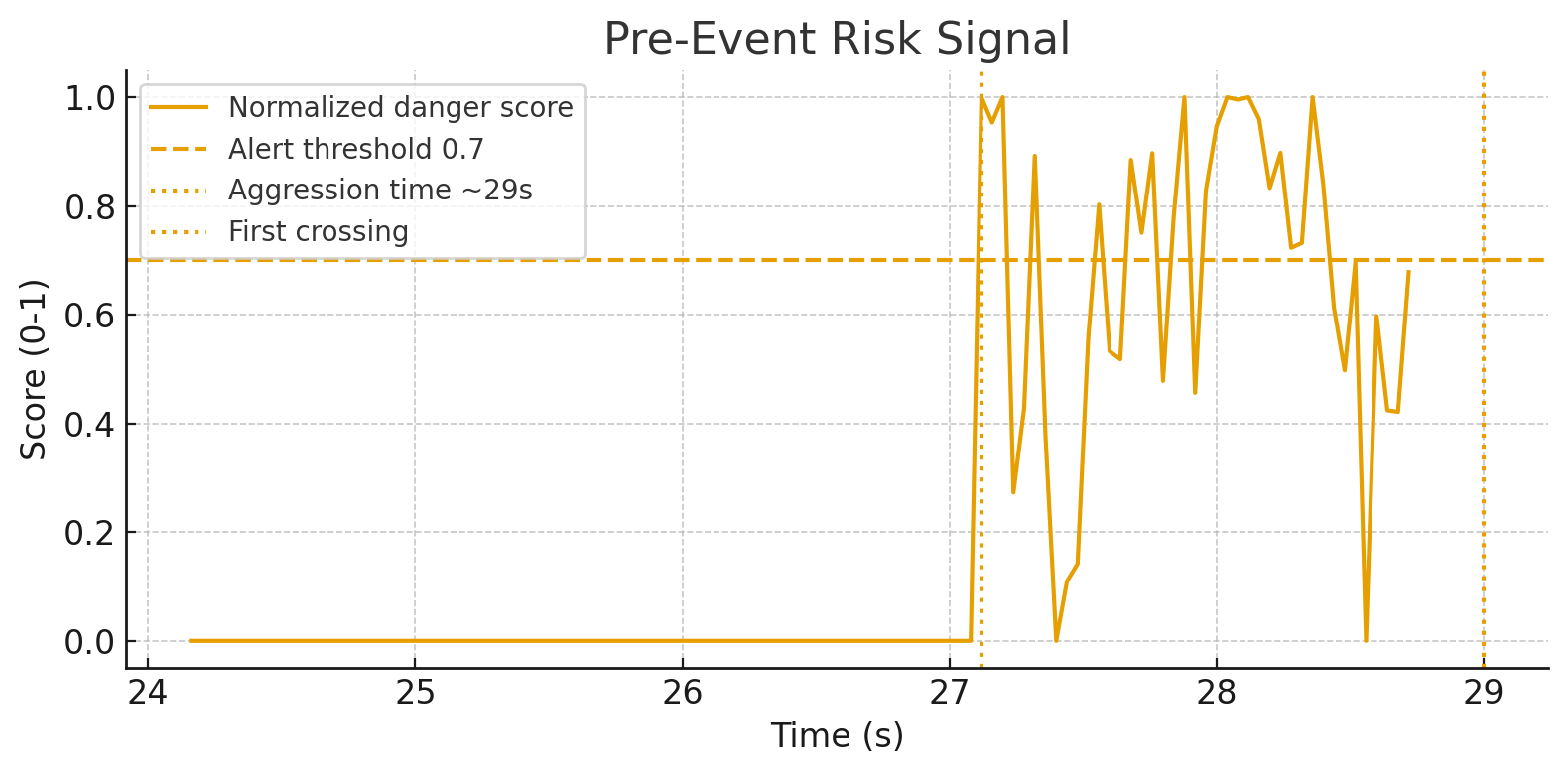

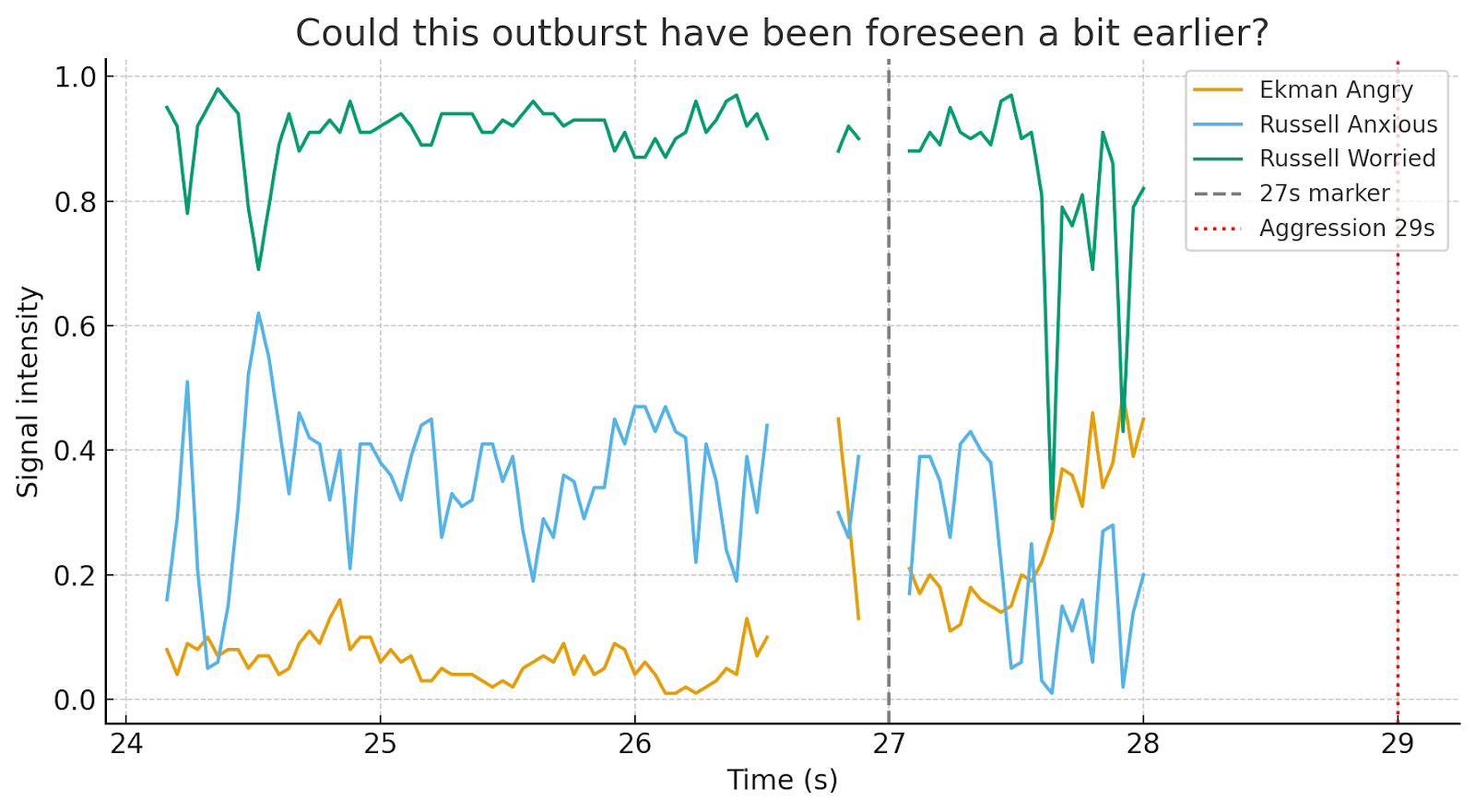

Data analysis of seconds 24–29 of the video “Headbutts to journalist, Rai 2 crew attacked in Ostia by Roberto Spada_09_16_2025_12_48_26”

A combined “risk” signal, built from rising anger, higher arousal, falling valence, increasing negative affects, and the absence of positive affects, crosses an alert threshold at ~27.12 s, about 1.9 s before the aggression at 29 s.

So, at least in this clip, there were detectable precursors that could have raised a “danger” alert a couple of seconds in advance.

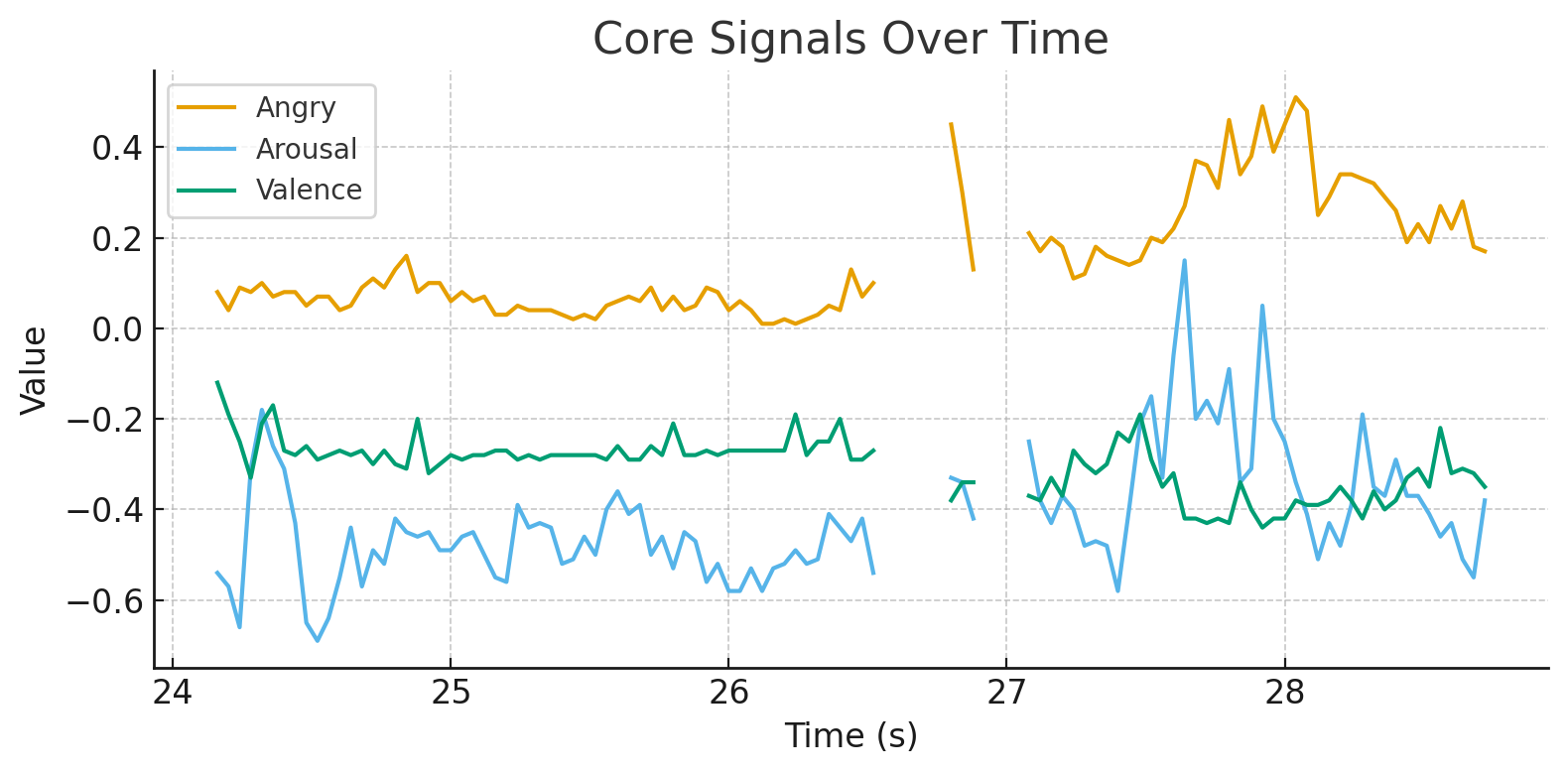

The charts above trace the trend lines around the event.

What drove the early warning

I constructed a conservative, real-time-friendly “danger score” (normalized to 0–1) from 3-second rolling z-scores of:

- Anger (level and rate of rise),

- Arousal (level and rate),

- Valence (penalizing negative shifts),

- Negative affects (e.g., Worried, Uncomfortable, Anxious, Sad),

- Absence of positive affects (e.g., Peaceful, Pleased, Relaxed, Satisfied).
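The score construction above can be sketched in Python. This is a minimal sketch under stated assumptions, not MorphCast's implementation: the causal rolling z-score, the equal weights, the logistic squashing to 0–1, and the names `rolling_z`, `rate`, and `danger_score` are all hypothetical, and `neg_affects` / `pos_affects` are assumed to be per-frame averages of the affect channels listed above.

```python
import numpy as np

def rolling_z(x, window: int) -> np.ndarray:
    """Causal rolling z-score: each frame is standardized against the last
    `window` frames (itself included), so no future frames are needed."""
    x = np.asarray(x, dtype=float)
    z = np.zeros_like(x)
    for i in range(len(x)):
        seg = x[max(0, i - window + 1): i + 1]
        std = seg.std()
        z[i] = (x[i] - seg.mean()) / std if std > 1e-9 else 0.0
    return z

def rate(x, fps: float) -> np.ndarray:
    """Per-second first difference, same length as x (first value is 0)."""
    x = np.asarray(x, dtype=float)
    return np.diff(x, prepend=x[0]) * fps

def danger_score(anger, arousal, valence, neg_affects, pos_affects,
                 fps: float, window_s: float = 3.0) -> np.ndarray:
    """Hypothetical 0-1 danger score: equal-weight mean of the cues, squashed
    with a logistic function (weights and squashing are assumptions)."""
    w = max(2, int(round(window_s * fps)))
    cues = [
        rolling_z(anger, w),                      # anger level
        rolling_z(rate(anger, fps), w),           # anger rate of rise
        rolling_z(arousal, w),                    # arousal level
        rolling_z(rate(arousal, fps), w),         # arousal rate
        np.maximum(0.0, -rolling_z(valence, w)),  # only negative valence shifts count
        rolling_z(neg_affects, w),                # negative affects (aggregated)
        -rolling_z(pos_affects, w),               # absence of positive affects
    ]
    raw = np.mean(cues, axis=0)
    return 1.0 / (1.0 + np.exp(-raw))

# Usage on synthetic data (not the clip's data): flat until 7 s, then escalation.
fps = 25
t = np.arange(0, 10, 1 / fps)
ramp = np.clip(t - 7.0, 0.0, None)
score = danger_score(anger=0.2 * ramp, arousal=0.1 * ramp, valence=-0.1 * ramp,
                     neg_affects=0.1 * ramp, pos_affects=-0.1 * ramp, fps=fps)
```

Because the z-scores only look backwards, the score can be updated frame by frame on the device; a flat input yields a neutral 0.5, and the score rises only once the cues move together.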

With the alert line at 0.7, the score first crosses it at ~27.12 s, about 1.88 s ahead of the aggression at 29.0 s. In the plots, you can see that:

- Anger ramps up with a positive derivative,

- Arousal stays elevated,

- Valence trends downward,

- Positive affects remain muted while negative affects climb.
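The lead-time arithmetic is simple: find the first frame at or above the 0.7 line and subtract its timestamp from the event time (29.0 − 27.12 = 1.88 s). A minimal sketch, with hypothetical function names and toy scores that are not the clip's actual data:

```python
from typing import Optional, Sequence

def first_crossing(times: Sequence[float], score: Sequence[float],
                   threshold: float = 0.7) -> Optional[float]:
    """Timestamp of the first sample whose score reaches the threshold, else None."""
    for t, s in zip(times, score):
        if s >= threshold:
            return t
    return None

def lead_time(crossing_s: Optional[float], event_s: float) -> Optional[float]:
    """Seconds of warning before the event (positive means the alert came first)."""
    return None if crossing_s is None else event_s - crossing_s

# Toy values for illustration only.
times = [27.00, 27.04, 27.08, 27.12, 27.16]
score = [0.55, 0.61, 0.66, 0.72, 0.78]
t_alert = first_crossing(times, score)
print(t_alert, round(lead_time(t_alert, 29.0), 2))  # 27.12 1.88
```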

What this implies for a live predictor

A practical rule you could run on the device itself (at the edge), frame by frame:

Alert if (within a 3 s rolling window):

- anger_derivative_z > 0 AND

- ( arousal_z > 0.5 OR arousal_derivative_z > 0 ) AND

- ( valence_z < −0.5 OR positive_affects_z < −0.5 )

- → Raise the alert when these conditions hold and the combined score stays ≥ 0.7 for at least 0.3 s (a minimum-duration hold, i.e. temporal hysteresis, to avoid flicker).
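A minimal Python sketch of the rule above, assuming every `*_z` input is already a per-frame 3 s rolling z-score and that “for ≥ 0.3 s” means an unbroken run of qualifying frames. The parameter names follow the rule; the function name `alert_track` and the toy inputs in the usage lines are hypothetical.

```python
import math
import numpy as np

def alert_track(anger_derivative_z, arousal_z, arousal_derivative_z, valence_z,
                positive_affects_z, score, fps: float, threshold: float = 0.7,
                min_hold_s: float = 0.3) -> np.ndarray:
    """Per-frame alert state. All *_z inputs are assumed to be 3 s rolling z-scores."""
    gate = ((np.asarray(anger_derivative_z) > 0)
            & ((np.asarray(arousal_z) > 0.5) | (np.asarray(arousal_derivative_z) > 0))
            & ((np.asarray(valence_z) < -0.5) | (np.asarray(positive_affects_z) < -0.5)))
    candidate = gate & (np.asarray(score) >= threshold)
    hold = max(1, math.ceil(round(min_hold_s * fps, 6)))  # frames the condition must persist
    alert = np.zeros(len(candidate), dtype=bool)
    run = 0
    for i, c in enumerate(candidate):
        run = run + 1 if c else 0  # length of the current unbroken run
        alert[i] = run >= hold
    return alert

# Usage with toy inputs at 10 fps: all gates open, score crosses 0.7 at frame 4.
fps, n = 10, 10
ones = np.ones(n)
score = np.array([0.5] * 4 + [0.8] * 6)
alert = alert_track(anger_derivative_z=ones, arousal_z=ones, arousal_derivative_z=0 * ones,
                    valence_z=-ones, positive_affects_z=0 * ones, score=score, fps=fps)
first = int(np.argmax(alert)) / fps if alert.any() else None
print(alert.astype(int).tolist(), first)  # [0, 0, 0, 0, 0, 0, 1, 1, 1, 1] 0.6
```

This sketch implements only the minimum-duration hold. A classic two-threshold hysteresis would additionally keep the alert on until the score falls below a lower release level (for example 0.6, an arbitrary choice).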

This rule produced the ~2 s lead time in this clip.

Could this outburst have been foreseen a bit earlier? Yes…

Between 20 and 27 seconds, three observed warning signals already converge, and a fourth is commonly associated with them:

- Ekman Angry begins to rise steadily after second 25.

- Russell Anxious oscillates but remains significantly above baseline, showing inner agitation.

- Russell Worried is consistently high (close to maximum), a strong indicator of mounting inner conflict and threat anticipation.

- Russell Disgust (though not available in this dataset extract) is typically correlated in similar contexts with negative escalation and rejection cues.

When such signals co-occur, the system captures not just a single emotion but a synergistic emotional cluster: growing anger, amplified by anxiety, typically reinforced by disgust, and locked in a state of worry. This cluster suggests that the subject is not only irritated but also losing emotional regulation, a pattern that often precedes aggressive behavior.

The graph from 20–28 s illustrates this trajectory clearly: anger climbing, anxiety sustained, and worry dominating the affective space, with the 27 s mark already showing heightened risk well before the 29 s aggression.

The escalation could therefore have been anticipated a few seconds in advance: the simultaneous rise of multiple negative dimensions, together with the absence of balancing positive states, formed a clear early-warning signature in this clip.

Note: MorphCast is the only technology that can detect, in real time and directly on the device, both Ekman’s facial signals and the 98 emotional states defined by Russell’s model of affect.

How can this help protect us in dangerous situations?

Imagine wearing smart glasses powered by MorphCast that trigger safety alerts, much like the warning systems in an airplane cockpit.