Last Update May 12, 2026

Dear customer,

We want to provide you with transparency and clarity regarding our emotional artificial intelligence system. This document explains how the system works, how it extracts data, the sources of its data, and how it is evaluated. It also describes known limitations and biases and assesses the potential risks and benefits of using the emotional AI system. To align with the AI Act, we carry out ongoing compliance activities, including regular risk assessments, documentation of compliance measures, and a process for addressing potential biases within our system. We also provide guidelines for safe and effective use, aiming to ensure responsible deployment of our AI system.

The technology developed and distributed by Morphcast Inc., called “MorphCast® EMOTION AI HTML5 SDK”, and/or the other available products, services and features that integrate it (e.g., MorphCast Web Apps or the MorphCast Emotion AI Interactive Media Platform) is artificial intelligence software that processes images from a video stream to estimate, with the varying degrees of probability and accuracy described later in this document, the emotional state of the framed subject and some other apparent characteristics, such as hair cut or color, apparent age and gender, and facial emotion expressions, in any case without identifying the subject (hereinafter, the “Software”). For a complete list of the features extracted by the Software, see the Capabilities section below.

This technology, which Morphcast Inc. makes available to its users (qualified as “Registered Users” under the applicable “Terms of Use”, and hereinafter referred to as “Customers”), has been specifically designed and developed so as not to identify the framed subjects. These subjects are the end users of the Software chosen by the Customer (hereinafter, the “End Users”), with whom Morphcast Inc. has no relationship whatsoever.

The Software can be used autonomously by the Customer or, at the Customer’s choice, activated within other services provided by Morphcast Inc., whose terms of use and privacy policies are available at this link: mission page. As regards the registration data of Customers, the related Privacy Policy also applies, available at this link: Emotion AI – Privacy Policy.

Index

- How it works

- Respecting Human Rights in the Age of AI

- User Profiling

- Training Data

- Measures to Ensure Security and Reliability

- Principles of Cybersecurity and Data Protection

- Damage or Discrimination Complaint System

- Transparency on the degree of probability and Accuracy

- Capabilities – Type of data extracted

- Bibliography

- Contacts

How it works – Operation

The Software works as a JavaScript library embedded in a web page, usually on the Customer’s domain, and runs in the browser of the End User’s device. As indicated above, the End User has a relationship exclusively with the Customer (and not with Morphcast Inc.).

The Software does not process biometric data that allow the unique identification of a subject. It performs only instantaneous (volatile) processing of personal data while analyzing the images (frames) read from the video stream provided by the End User’s browser (for example, from a camera, which is used only as a sensor and not to record images or video). Morphcast Inc. neither processes nor controls such personal data, as they are processed automatically by the Software directly in the browser or app on the End User’s device.

Accordingly, the use of the Software is subject to the following terms, both of which are beyond Morphcast Inc.’s control: (1) the terms of use of the End User’s browser in which the Software is incorporated and (2) the terms of any third-party software or API required for the web page to function.

Notwithstanding the foregoing, as the supplier of the Software, Morphcast Inc. (a) warrants that it has adopted all appropriate security measures in the design of the Software, including with regard to the processing of personal data, following a privacy-by-design approach and ensuring adequate security of the software itself; and (b) is available to collaborate with the Customer (who, if it uses the extracted data autonomously, acts as Data Controller) on any processing of personal data relating to the End User, showing and explaining all the functions of the Software so that the Customer can carry out a precise analysis of the risks to the fundamental rights and freedoms of the persons concerned (including any impact assessments, where the Data Controller considers them necessary or appropriate). It is the Data Controller who must establish the purposes, methods and legal basis of the processing, as well as the time and place (physical or virtual) of storage of the End Users’ personal data, and who must provide End Users with specific online information in accordance with the applicable legislation on the protection of personal data.

According to the Terms of Use of the Software (available here for all available applications: Terms of Use), the Software is intended solely and exclusively for professional users, expressly excluding consumers and, therefore, any use of the Software for domestic or personal purposes. In any case, whatever processing the Customer carries out as Data Controller, the consequences arising under the applicable legislation, including the legislation on the protection of personal data, are the exclusive responsibility of the Data Controller.

The Software also operates within the private corporate hardware and software environment of the Customer’s End User (on end-point resources, including web pages on the Customer’s domain and therefore under its control), which is equipped with the security measures necessary to prevent compromise of the data processed by the Software. Accordingly, responsibility for the processing of End Users’ personal data by Customers lies exclusively with the Customer, which therefore undertakes, in accordance with the “Indemnification” section of the Software’s terms of use, to fully indemnify Morphcast Inc. against any adverse consequence that may arise in this regard.

As regards the emotional data made available to Customers by the Software through the “Data Storage” module and its dashboard, including when the Customer uses the MorphCast Emotion AI Interactive Media Platform service with the “Statistic Dashboard” function enabled, such statistical data cannot be considered personal data, since they are not associated with natural persons. Therefore, these data are to be considered not subject to privacy laws.

Important: To ensure you understand how your data and the data of your users are used, please review the privacy policy directly associated with each product. You can find summaries of all policies on this page.

Respecting Human Rights in the Age of AI

At MorphCast, we believe in the transformative power of AI to connect people and enhance communication. However, we also recognize the ethical responsibility that comes with harnessing such powerful technology. We are committed to upholding human rights principles in all aspects of our development and operation, ensuring that AI enhances human flourishing while respecting individual dignity and fundamental freedoms.

Our Guiding Principles:

- Transparency and Explainability: We strive for transparency in our data collection, model development, and decision-making processes. We provide users with clear explanations of how MorphCast works and how their data is used.

- Non-discrimination and Fairness: We design and train our systems to be fair and unbiased, avoiding any potential for discrimination based on protected characteristics. We actively monitor for and address any potential biases that may emerge.

- Privacy and Data Protection: We respect user privacy by adhering to relevant data protection regulations and collecting only the minimum data necessary for service operation. We empower users with control over their data and prioritize its security and confidentiality.

- Freedom of Expression and Opinion: We recognize the importance of freedom of expression and opinion. We do not censor user content and only restrict communication that violates our terms of service or promotes illegal activities.

- Accountability and Oversight: We hold ourselves accountable for our actions and are open to feedback and scrutiny. We engage with stakeholders from diverse backgrounds to ensure our practices are ethical and responsible.

Putting Principles into Practice:

- Bias Detection and Mitigation: We employ diverse datasets and utilize fairness metrics to identify and mitigate potential biases in our models. We regularly review and update our systems to ensure consistent fairness.

- User Control and Consent: We provide users with clear options to control how their data is collected, used, and shared. We obtain explicit consent before processing any personal information.

- Transparent Privacy Policy: Our privacy policy is easily accessible and clearly explains our data practices. We avoid complex legal jargon and ensure the information is understandable to all users.

- Human Review and Oversight: We implement human review processes for sensitive content and algorithmic decisions, ensuring a balance between AI automation and human judgment.

- Collaboration and Stakeholder Engagement: We actively engage with civil society organizations, legal experts, and ethicists to learn from diverse perspectives and ensure our practices align with evolving human rights standards.

By upholding these principles, MorphCast aims to be a leader in responsible AI development, fostering an environment where technology serves humanity and empowers individuals.

It is important to note that this is not a comprehensive list and the specific implementation of these principles will evolve as technology and regulations develop. We remain committed to ongoing learning and improvement, ensuring that MorphCast remains a force for good in the world.

User Profiling

At MorphCast, we recognize the potential of user profiling to personalize experiences and tailor our platform to individual needs. However, we also understand the ethical concerns surrounding user profiling and are committed to utilizing this technology responsibly and transparently.

Our Approach to User Profiling:

- Limited and Necessary Data: We collect only the data essential for understanding user preferences and customizing their experience. We avoid unnecessary data collection and prioritize user privacy.

- Transparency and Choice: We inform users about what data we collect and how it is used for profiling purposes. We empower users with clear options to manage their profile data and opt out of profiling altogether.

- Aggregation and Anonymization: We prioritize data minimization and anonymization wherever possible. We aggregate user data for analysis and avoid personal identification whenever feasible.

- Fairness and Non-discrimination: We design our profiling algorithms to be fair and unbiased, ensuring they do not disadvantage or discriminate against any particular group of users.

- Human Oversight and Control: We implement human oversight mechanisms to review and validate profiling results, ensuring responsible decision-making and preventing algorithmic bias.

Benefits of User Profiling:

- Personalized Recommendations: We leverage profiling to suggest relevant content, features, and connections to individual users, enhancing their experience and engagement.

- Improved Content Discovery: By understanding user preferences, we can tailor content displays and search results to individual interests, promoting relevant and valuable information.

- Enhanced Accessibility: Profiling can inform accessibility features and personalize interfaces to better meet the needs of diverse users.

Addressing Concerns:

- Data Security and Privacy: We prioritize data security with robust measures like encryption and access controls. We comply with relevant data protection regulations and provide users with control over their data.

- Algorithmic Bias: We actively test and monitor our profiling algorithms for potential biases and take corrective actions to ensure fairness and inclusivity.

- Transparency and Control: We offer users clear explanations of how their data is used for profiling and provide them with options to manage their profiles and opt out.

By adhering to these principles, MorphCast strives to utilize user profiling responsibly and ethically. We believe that personalized experiences can enhance user engagement without compromising individual privacy or creating discriminatory outcomes.

It is important to note that this is an evolving area and we are committed to continuously learning and adapting our approach as technology and regulations change. We welcome feedback and discussion on user profiling to ensure we remain responsible and transparent in our practices.

Training Data

Our neural networks are developed with custom datasets that encompass a diverse range of faces covering various ethnicities, ages, and genders. All the models are trained on diverse static facial images, including in-the-wild conditions rather than only controlled lab imagery. The training data include variability in facial appearance, expression, lighting, image quality, and face orientation. For confidentiality reasons, details about the datasets remain proprietary. However, it is important to acknowledge that, like any AI system, ours is not immune to biases related to race, gender, and age. Nevertheless, we have evidence indicating that such biases do not significantly affect our analysis results. Specifically, the MorphCast SDK underwent rigorous evaluation on two benchmark tests by an independent research center, as documented in their publication (https://psyarxiv.com/kzhds/). The test was performed on the BU-4DFE testbed across different ages, genders, and the following ethnicities: Asian, Black, Hispanic/Latino, African American, and White. This evaluation confirmed the SDK’s accuracy across different ages, genders, and ethnic groups.

We encourage users to be mindful of potential biases on a case-by-case basis, aiming to mitigate or eliminate their effects whenever possible. This approach ensures the most effective use of our technology, tailored to the specific objectives of each analysis.

Measures to Ensure Security and Reliability

At MorphCast, we recognize the importance of trust in AI. As a U.S.-based company operating globally, we leverage AI to foster meaningful human connections while maintaining a strong commitment to security and reliability.

In alignment with the AI Act’s regulations on AI safety and accountability, we proactively address key risks associated with our system to ensure compliance for users in the European Union (EU) and beyond.

Data Security & Privacy

- Accuracy & Bias Mitigation: We strive for fair and unbiased AI models by using diverse training datasets, implementing bias detection mechanisms, and conducting regular fairness audits.

- Data Security: We enforce strict encryption protocols, access controls, and continuous security testing to safeguard user data. Our zero-trust approach, role-based access management, and cybersecurity best practices minimize risks.

- Compliance with Global Regulations: MorphCast is committed to respecting user privacy and adhering to data protection laws. We ensure:

- Clear and informed user consent

- Explicit data collection purposes

- Data minimization and user control over personal information

System Security & Reliability

- Fairness & Explainability: MorphCast uses counterfactual explanations, interpretability techniques, and fairness metrics to enhance transparency and prevent discriminatory outcomes.

- Vulnerability Management: We conduct routine vulnerability scans, implement security patches, and follow secure development best practices, including penetration testing and intrusion detection systems.

- Operational Reliability: Continuous performance monitoring, stress testing, and automated error detection ensure stable, predictable, and error-resilient AI performance.

Ethical & Responsible AI Use

- Fairness & Inclusivity: We actively promote diverse and equitable AI applications through stakeholder engagement, bias reduction strategies, and transparent handling of sensitive data.

- Preventing Misuse & Manipulation: MorphCast proactively detects and mitigates AI misuse, provides user education on responsible AI use, and ensures strict compliance with AI Act obligations for EU users.

- Ethical AI Implementation: We assess societal impact, workforce implications, and AI transparency, ensuring responsible AI adoption while supporting regulatory compliance and innovation.

Performance Monitoring & Continuous Improvement

We continuously evaluate MorphCast’s accuracy, security, and compliance using real-time performance dashboards and automated monitoring tools. Our update process includes:

- Retraining AI models with diverse, high-quality data

- Implementing advanced security measures

- Adjusting algorithmic parameters for enhanced performance

- Maintaining a transparent changelog of updates

This is just an overview of our dedication to AI security and reliability. As regulations, including the AI Act, evolve, MorphCast remains committed to transparency, legal compliance, and responsible AI deployment—ensuring AI enhances human interaction while upholding the highest ethical standards.

Principles of Cybersecurity and Data Protection

At MorphCast, we operate with the understanding that the power of AI comes with a significant responsibility – protecting user data and ensuring the security of our platform. We are committed to upholding the highest standards of cybersecurity and data protection, guided by the following key principles:

1. Security by Design: Security is embedded into every aspect of MorphCast, from system architecture to development practices. We employ industry-leading security measures like encryption, access controls, and regular security assessments to ensure data confidentiality, integrity, and availability.

2. Privacy-First Approach: We respect user privacy by collecting only the data essential for service operation and obtaining explicit consent before processing it. We adhere to data minimization principles, limit data retention periods, and empower users with control over their data through transparent privacy policies and clear opt-out mechanisms.

3. Transparency and Accountability: We believe in being transparent about how we collect, use, and protect user data. Our privacy policies are easily accessible, and we provide clear explanations regarding data processing purposes and potential risks. We are accountable for our actions and regularly review our practices to ensure compliance with relevant regulations and ethical standards.

4. User Education and Empowerment: We believe an informed user base is key to robust security and privacy. We provide users with accessible resources and educational materials to understand how MorphCast handles data and how to use it responsibly. We encourage users to report any suspicious activity and actively participate in our security improvement efforts.

5. Continuous Improvement: We understand that the cybersecurity and data protection landscape is constantly evolving. We are committed to staying ahead of the curve by conducting regular risk assessments, incorporating new security best practices, and actively engaging with relevant experts and stakeholders.

These principles are the foundation of our commitment to building a trustworthy and secure platform for AI-powered engagement. We strive to earn and maintain user trust by continuously demonstrating our dedication to responsible data practices and robust cybersecurity measures.

By upholding these principles, MorphCast aims to be a leader in responsible AI development and foster a trusted environment for human connection and engagement.

Damage or Discrimination Complaint System

At MorphCast, we strive to utilize AI responsibly and create a platform that fosters positive and inclusive interactions. However, we recognize that any use of technology can have unintended consequences. To ensure fairness and address potential concerns, we have established a clear and accessible Damage or Discrimination Complaint System.

Our Commitment:

- We are committed to investigating all claims of damage or discrimination arising from the use of MorphCast promptly and comprehensively.

- We believe in transparency and will communicate openly with complainants throughout the investigation process.

- We will take appropriate action to address any identified issues, implementing remedial measures to prevent similar situations in the future.

What You Can Report:

You can submit a complaint if you believe you have suffered:

- Discrimination: This includes any unfair treatment based on factors such as race, ethnicity, religion, gender, sexual orientation, disability, or age.

- Emotional harm: This could involve distress, anxiety, or other negative emotions caused by the use of MorphCast.

- Financial damage: This covers any direct financial losses resulting from the misuse of MorphCast.

How to Submit a Complaint:

- Online Form: Submit your complaint directly through our dedicated online form accessible on our website.

- Email: You can send your complaint to our dedicated email address: damages@morphcast.com

What We Need from You:

To expedite the investigation process, please provide the following information in your complaint:

- A clear description of the incident.

- The date and time the incident occurred.

- Any relevant screenshots or recordings.

- Your contact information.

What Happens Next:

- Upon receiving your complaint, we will acknowledge it within 24 hours.

- A dedicated team will review your complaint and may request additional information if needed.

- We will keep you informed of the progress of the investigation and communicate our findings and any remedial actions within a reasonable timeframe.

Additional Resources:

- You can access our Terms of Service and Privacy Policy on our website for further information regarding our commitments.

- We encourage you to contact us if you have any questions or concerns about our Damage or Discrimination Complaint System.

Transparency on the degree of probability and accuracy

Emotion AI facial expression recognition is a form of AI designed to analyze facial expressions and identify emotions such as happiness, sadness, anger, and surprise. MorphCast combines computer vision and deep convolutional neural network algorithms to analyze images or videos of people’s faces and identify patterns that correspond to specific emotions.

MorphCast Engine has been tested by AI specialists using public benchmark testbeds and independent academic evaluations commonly adopted by the scientific community to assess facial analysis, emotion recognition, age, gender, pose, and arousal/valence estimation. These include BU-4DFE, UT-Dallas, CK+ / Extended Cohn-Kanade, LFW, and UTKFaces.

MorphCast Engine is designed to be transversal and general-purpose, but the best results for a specific application are usually achieved by tuning configuration, thresholds, smoothing, aggregation windows, and post-processing logic to each scenario.
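As a minimal sketch of the kind of scenario-specific tuning described above, the class below post-processes any per-frame score in [0, 1] with exponential smoothing, a sliding aggregation window, and a threshold. It is not part of the MorphCast SDK: the name `SignalPostProcessor` and the parameters `alpha`, `window` and `threshold` are hypothetical, and the exponential moving average is only one common smoothing choice among several.

```python
from collections import deque
from statistics import mean
from typing import Optional, Tuple


class SignalPostProcessor:
    """Hypothetical post-processing for a per-frame score in [0, 1].

    alpha: exponential smoothing factor (higher reacts faster, lower is steadier)
    window: number of smoothed samples averaged in each aggregation window
    threshold: windowed level at or above which the signal is flagged
    """

    def __init__(self, alpha: float = 0.3, window: int = 5, threshold: float = 0.6):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.threshold = threshold
        self._smoothed: Optional[float] = None
        self._recent = deque(maxlen=window)

    def update(self, raw: float) -> Tuple[float, bool]:
        # Exponential moving average: blend the new frame into the running estimate.
        if self._smoothed is None:
            self._smoothed = raw
        else:
            self._smoothed = self.alpha * raw + (1.0 - self.alpha) * self._smoothed
        # Keep only the last `window` smoothed values and average them.
        self._recent.append(self._smoothed)
        windowed = mean(self._recent)
        # The flag is a signal for review, not a decision.
        return windowed, windowed >= self.threshold
```

Lower `alpha` values and longer windows trade responsiveness for stability. Consistent with the guidance on decision-support use, a raised flag would be a prompt for human review rather than an automated decision.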

MorphCast outputs should be used as probabilistic indicators and decision-support signals, not as autonomous decision-making tools. The Engine should not be used in use cases where the system independently makes decisions with legal, medical, financial, employment, educational, discriminatory, or similarly significant effects on individuals. In such contexts, measurements should only support human review, aggregate analytics, assistive workflows, or user-experience optimization, with proper oversight and safeguards.

The accuracy of emotion AI facial expression recognition technology can be affected by a number of factors, including:

- Image or video quality: The technology is more likely to produce accurate results when the images or videos being analyzed are of high quality, with clear and well-lit faces. Factors such as very low resolution, poor lighting, or camera angle can make it more difficult for the algorithm to accurately identify emotions.

- Algorithm: Different algorithms have different levels of accuracy. MorphCast algorithms use deep learning techniques such as convolutional neural networks (CNNs) and are trained on large datasets; such approaches are generally considered more accurate than those based on traditional machine learning techniques.

- Training data: The accuracy of emotion AI facial expression recognition technology can also be affected by the quality and diversity of the training data the algorithm has been exposed to. MorphCast algorithms are trained on a diverse dataset of images and videos of people of different ages, genders, and ethnicities, and are therefore likely to be more accurate than algorithms trained on a more limited dataset.

- Lighting conditions: In addition to our commitment to diversity in training data, it is crucial to address the role of environmental factors, particularly lighting conditions, in the accuracy of our AI technology. The way light interacts with skin (how much of it is reflected or absorbed) varies significantly across skin tones. This variation is not a matter of better or worse performance but depends on the context: in low-light conditions, facial analysis may be more accurate on lighter skin tones, whereas in brightly lit environments, darker skin tones may be detected with greater precision. These differences, which stem from the physics of how light interacts with skin, can have a more substantial impact on recognition accuracy than the residual biases we have worked to minimize by training our AI on a vast and diverse dataset.
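One practical response to these factors is to check frame conditions before relying on an estimate. The sketch below is hypothetical and not a MorphCast API: it assumes per-frame statistics (`FrameStats`: mean face brightness, face height in pixels, head yaw) are computed elsewhere, and every threshold is an illustrative placeholder that would need to be calibrated for each deployment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FrameStats:
    mean_brightness: float  # average luminance of the face region, 0 to 255
    face_height_px: int     # height of the detected face box, in pixels
    yaw_rad: float          # head-pose yaw, in radians


def quality_issues(
    stats: FrameStats,
    min_brightness: float = 40.0,
    max_brightness: float = 220.0,
    min_face_px: int = 64,
    max_abs_yaw_rad: float = 0.6,
) -> List[str]:
    """Return reasons why a frame may give unreliable estimates (empty list = OK)."""
    issues = []
    if stats.mean_brightness < min_brightness:
        issues.append("too dark")
    elif stats.mean_brightness > max_brightness:
        issues.append("overexposed")
    if stats.face_height_px < min_face_px:
        issues.append("face too small")
    if abs(stats.yaw_rad) > max_abs_yaw_rad:
        issues.append("head turned away")
    return issues
```

Since lighting interacts differently with different skin tones, brightness thresholds of this kind would need to be validated across the populations involved rather than fixed once.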

It is also important to note that emotion recognition technology is still in its early stages, and MorphCast continuously conducts research to improve its accuracy. Furthermore, these technologies exhibit a degree of bias, particularly with regard to race and gender. It is therefore important to be aware of these biases and take steps to mitigate them, a commitment MorphCast has made since its inception.

On the subject of bias, we cite a recent study:

Abstract: Emotional expressions are a language of social interaction. Guided by recent advances in the study of expression and intersectionality, the present investigation examined how gender, ethnicity, and social class influence the signaling and recognition of 34 states in dynamic full-body expressive behavior. One hundred fifty-five Asian, Latinx, and European Americans expressed 34 emotional states with their full bodies. We then gathered 22,174 individual ratings of these expressions. In keeping with recent studies, people can recognize up to 29 full-body multimodal expressions of emotion. Neither gender nor ethnicity influenced the signaling or recognition of emotion, contrary to hypothesis. Social class, however, did have an influence: in keeping with past studies, lower class individuals proved to be more reliable signalers of emotion, and more reliable judges of full body expressions of emotion. Discussion focused on intersectionality and emotion. (PsycInfo Database Record (c) 2022 APA, all rights reserved).

Additionally, many studies have shown that emotion recognition technology is more accurate for certain emotions (e.g., happiness, anger) than for others (e.g., sadness, surprise), and accuracy varies across algorithms, which makes it difficult to give a single general accuracy figure for these technologies. Nevertheless, current emotion prediction accuracy can be estimated at between 70% and 80% for almost all algorithms on the market.
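Why a single figure is hard to give can be illustrated with a confusion matrix: overall accuracy can hide large differences between emotions. The generic sketch below uses standard evaluation arithmetic; it is not MorphCast's evaluation code, and the function names are illustrative.

```python
from typing import Dict, List, Sequence


def overall_accuracy(confusion: Sequence[Sequence[int]]) -> float:
    """Share of all samples whose predicted class matches the true class."""
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return correct / total


def per_class_recall(labels: List[str], confusion: Sequence[Sequence[int]]) -> Dict[str, float]:
    """Recall for each true class.

    confusion[i][j] counts samples whose true class is labels[i]
    and whose predicted class is labels[j].
    """
    recall = {}
    for i, label in enumerate(labels):
        row_total = sum(confusion[i])
        recall[label] = confusion[i][i] / row_total if row_total else float("nan")
    return recall
```

With a toy matrix (invented counts, not results from any study) in which one emotion is recognized correctly 9 times out of 10 and another 6 times out of 10, overall accuracy is 0.75 while per-class recall is 0.9 and 0.6.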

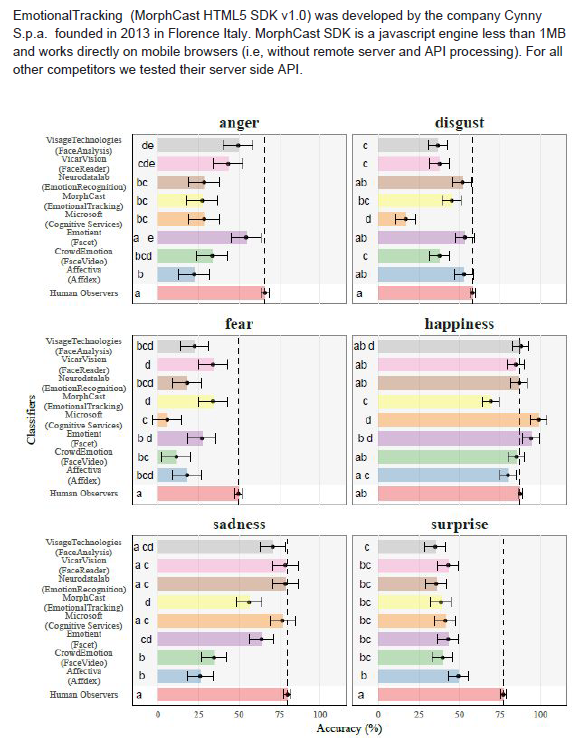

In this regard, MorphCast’s accuracy has been evaluated in a university study that compared the accuracy of several software systems on Paul Ekman’s six basic emotions with that of human observers. The results of the accuracy study are reported in the following publication:

Damien Dupré (Dublin City University), Eva G. Krumhuber (University College London), Dennis Küster (University of Bremen) and Gary McKeown (Queen’s University Belfast), published here: Emotion recognition in humans and machine using posed and spontaneous facial expression (you can also download the complete research in PDF format). The study describes the SDK as follows: “Emotional Tracking (SDK v1.0) was developed by the company MorphCast founded in 2013. Emotional Tracking SDK has the distinction of being a javascript engine less than 1MB and works directly on mobile browsers (i.e, without remote server and API processing).”


Capabilities – Type of data extracted

MorphCast SDK has a modular architecture that allows you to load only the modules you need. Here is a quick description of the available modules:

- FACE DETECTOR

- POSE

- AGE

- GENDER

- EMOTIONS

- AROUSAL VALENCE

- ATTENTION

- WISH

- POSITIVITY

- ALARMS

- OTHER FEATURES

FACE DETECTOR

It detects the presence of a face in the field of view of the webcam, or in the input image.

The tracked face is passed to the other modules for analysis. When multiple faces are present, the total number of faces is reported, but only the main face is analyzed.
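The document does not say how the main face is chosen. Purely as an illustration, the sketch below assumes it is the face with the largest bounding box; `FaceBox` and `select_main_face` are hypothetical names, not part of the SDK.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class FaceBox:
    x: float
    y: float
    width: float
    height: float


def select_main_face(faces: List[FaceBox]) -> Tuple[int, Optional[FaceBox]]:
    """Return (number of faces, main face), where the main face is assumed
    to be the one with the largest bounding-box area."""
    if not faces:
        return 0, None
    main = max(faces, key=lambda face: face.width * face.height)
    return len(faces), main
```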

POSE

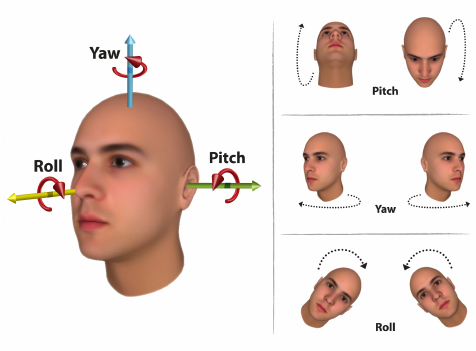

It estimates the head-pose rotation angles expressed in radians as pitch, roll and yaw.
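Because the angles are reported in radians, applications often convert them to degrees before applying thresholds. A minimal sketch, assuming the pose is available as a dictionary with the hypothetical keys `pitch`, `roll` and `yaw`; the 20-degree tolerance in `is_facing_camera` is an illustrative placeholder.

```python
import math
from typing import Dict

AXES = ("pitch", "roll", "yaw")


def pose_to_degrees(pose_rad: Dict[str, float]) -> Dict[str, float]:
    """Convert pitch, roll and yaw from radians to degrees."""
    return {axis: math.degrees(pose_rad[axis]) for axis in AXES}


def is_facing_camera(pose_rad: Dict[str, float], max_deg: float = 20.0) -> bool:
    """True when both yaw and pitch are within +/- max_deg of looking straight ahead."""
    deg = pose_to_degrees(pose_rad)
    return abs(deg["yaw"]) <= max_deg and abs(deg["pitch"]) <= max_deg
```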

AGE

It estimates the likely age of the main face with a granularity of years, or within an age group for better numerical stability.
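The following sketch shows why reporting an age group can be more stable than a point estimate: estimates that fluctuate within a group map to the same label. The group boundaries in `AGE_GROUP_EDGES` are hypothetical and do not reflect MorphCast's actual groups.

```python
from typing import Sequence

# Hypothetical group boundaries in years, for illustration only.
AGE_GROUP_EDGES = (18, 25, 35, 45, 55, 65)


def age_group(age_years: float, edges: Sequence[int] = AGE_GROUP_EDGES) -> str:
    """Map a point age estimate to a coarser age-group label such as '25-34'."""
    if age_years < 0:
        raise ValueError("age must be non-negative")
    lower = 0
    for upper in edges:
        if age_years < upper:
            return f"{lower}-{upper - 1}"
        lower = upper
    return f"{lower}+"
```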

GENDER

It estimates the most likely gender of the main face, Male or Female.

EMOTIONS

It estimates the presence and intensity of facial expressions in the form of seven categories: the six core emotions of the Ekman discrete model (anger, disgust, fear, happiness, sadness, and surprise) plus the neutral expression.
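A common way to consume per-emotion intensities is to pick the dominant expression while falling back to neutral when nothing is clearly expressed. A minimal sketch, assuming the intensities arrive as a dictionary keyed by the seven labels listed above; `dominant_emotion` and its `min_intensity` threshold are hypothetical.

```python
from typing import Dict, Tuple

CORE_EMOTIONS = ("anger", "disgust", "fear", "happiness", "sadness", "surprise", "neutral")


def dominant_emotion(intensities: Dict[str, float], min_intensity: float = 0.3) -> Tuple[str, float]:
    """Return the strongest expression, or 'neutral' if no emotion reaches min_intensity."""
    missing = [name for name in CORE_EMOTIONS if name not in intensities]
    if missing:
        raise ValueError(f"missing intensities for: {missing}")
    label = max(CORE_EMOTIONS, key=lambda name: intensities[name])
    if label != "neutral" and intensities[label] < min_intensity:
        # Nothing is clearly expressed: report the neutral expression instead.
        return "neutral", intensities["neutral"]
    return label, intensities[label]
```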

AROUSAL VALENCE

It estimates emotional arousal and valence intensity according to Russell’s dimensional model. Arousal is the degree of engagement (positive arousal) or disengagement (negative arousal); valence is the degree of pleasantness (positive valence) or unpleasantness (negative valence).
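The two dimensions can also be read in polar form, which is how the circumplex model arranges affects around the neutral origin. The sketch below assumes valence and arousal are signed values centred on zero (for example in [-1, 1]); it computes the angle and distance of a point and names its quadrant, and it is not the Scherer/Ahn mapping used by the Software.

```python
import math
from typing import Tuple


def affect_polar(valence: float, arousal: float) -> Tuple[float, float]:
    """Return (angle, intensity) of a point in the valence-arousal plane.

    angle: degrees counterclockwise from the positive valence axis, in [0, 360)
    intensity: distance from the neutral origin
    """
    angle = math.degrees(math.atan2(arousal, valence)) % 360.0
    return angle, math.hypot(valence, arousal)


def quadrant(valence: float, arousal: float) -> str:
    """Name the quadrant: pleasant vs. unpleasant, engaged vs. disengaged."""
    v = "positive valence" if valence >= 0 else "negative valence"
    a = "positive arousal" if arousal >= 0 else "negative arousal"
    return f"{v}, {a}"
```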

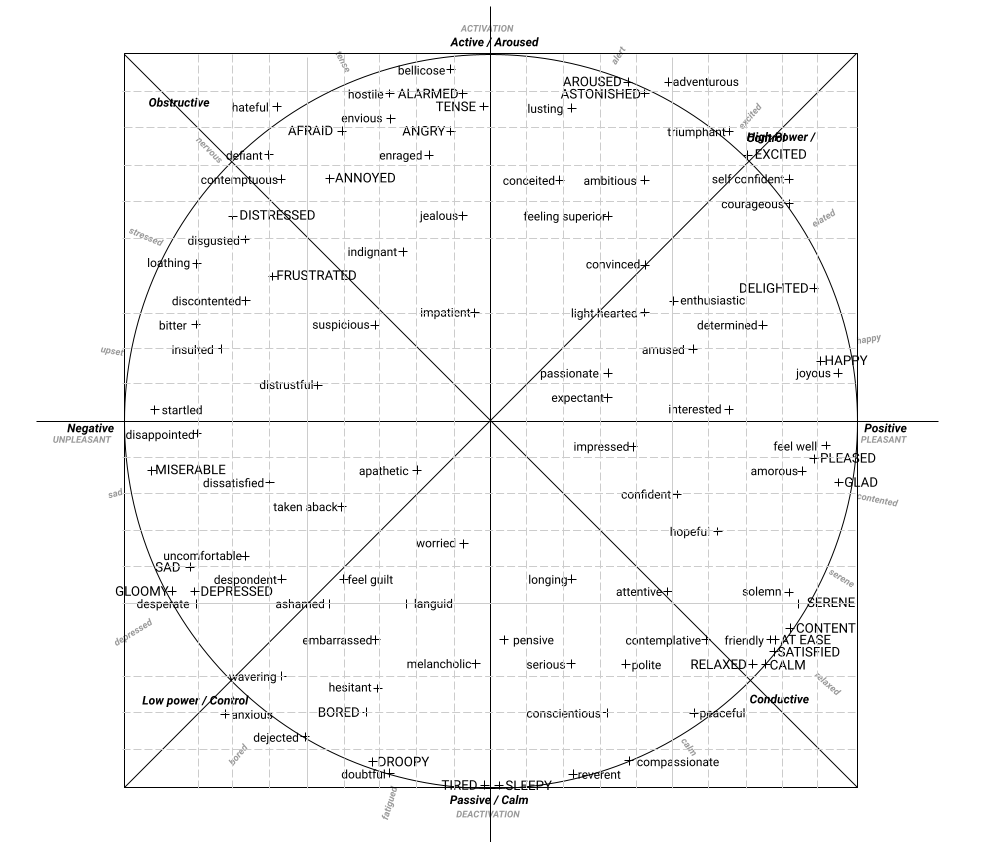

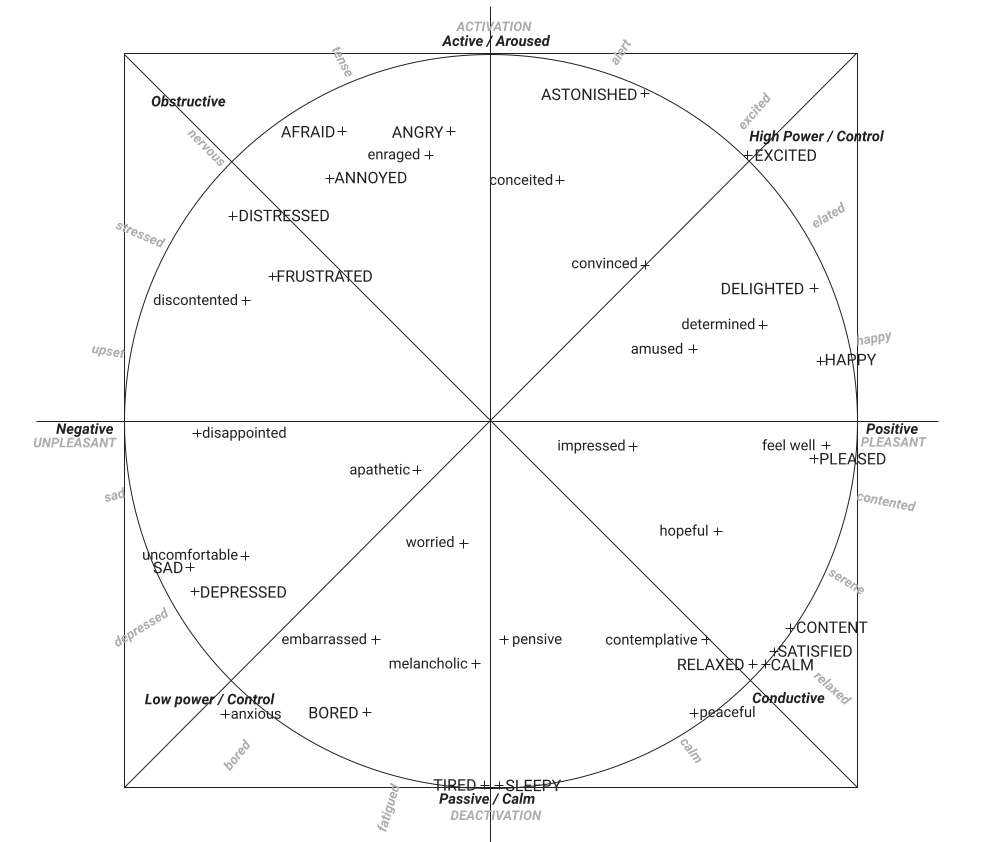

It also outputs an object containing the smoothed probabilities, in the range 0.00 to 1.00, of 98 emotional affects, computed from the point in the 2D (valence, arousal) emotional space according to the mappings of Scherer and Ahn:

Circumplex model of affect: the 98 affect words

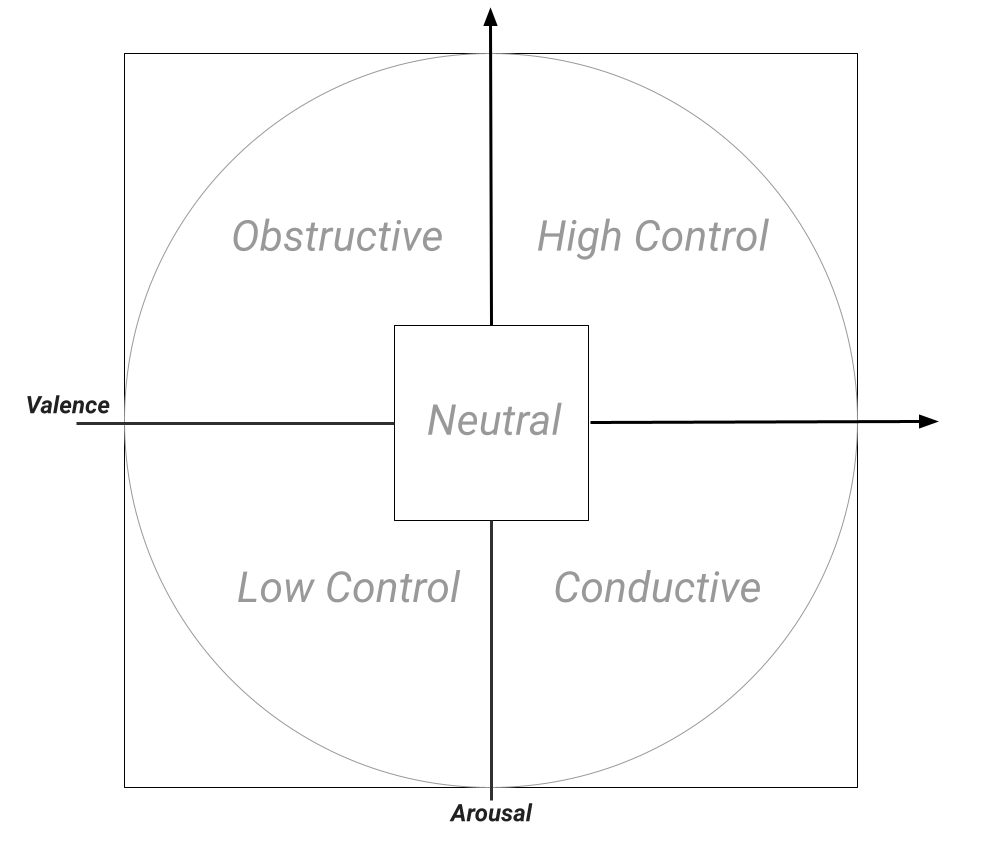

The 98 emotional affects output in the 2D quadrant

A simplified output of 38 emotional affects can be useful in several cases.

ATTENTION

It estimates the user’s level of attention to the screen, taking into account whether the user’s face is inside or outside the webcam’s field of view, head position, and other emotional and mood cues. The rates at which attention falls and rises can be tuned independently for each use case.

The attention module output should be understood as a holistic face-attention signal; it is not an eye-tracking solution. Currently, attention combines two main components:

- Face orientation / camera alignment, primarily based on head-pose yaw. This helps estimate whether the user is facing the camera/screen or turning away.

- Disengagement component from arousal, where strongly negative arousal can reduce the attention score, helping distinguish a visually present but low-engagement state.
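The two components and the independently tunable rise and fall speeds can be sketched as follows. This is a hypothetical illustration, not MorphCast's formula: the linear yaw fall-off, the `arousal_floor` cut-off, and the `rise_rate`/`fall_rate` values are all assumptions, and arousal is assumed to lie in [-1, 1].

```python
import math


def instant_attention(
    face_present: bool,
    yaw_rad: float,
    arousal: float,
    max_yaw_rad: float = math.radians(45),
    arousal_floor: float = -0.5,
) -> float:
    """Hypothetical per-frame attention target in [0, 1]."""
    if not face_present:
        return 0.0
    # Orientation: 1 when facing the camera, falling linearly to 0 at max_yaw_rad.
    orientation = max(0.0, 1.0 - abs(yaw_rad) / max_yaw_rad)
    # Disengagement: only arousal below arousal_floor lowers the score,
    # scaling from 1 at the floor down to 0 at arousal = -1.
    if arousal < arousal_floor:
        engagement = max(0.0, (arousal + 1.0) / (arousal_floor + 1.0))
    else:
        engagement = 1.0
    return orientation * engagement


class AttentionSmoother:
    """Tracks the per-frame target with separate rise and fall rates in (0, 1]."""

    def __init__(self, rise_rate: float = 0.2, fall_rate: float = 0.5, initial: float = 0.0):
        self.rise_rate = rise_rate
        self.fall_rate = fall_rate
        self.value = initial

    def update(self, target: float) -> float:
        # Use the rise rate when attention is increasing, the fall rate otherwise.
        rate = self.rise_rate if target > self.value else self.fall_rate
        self.value += rate * (target - self.value)
        return self.value
```

Separating the two rates lets an application, for example, drop attention quickly when the face turns away while restoring it more cautiously.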

WISH

It estimates the value of the MorphCast® Face Wish index, a proprietary metric that combines a viewer’s interest and sentiment to summarize, holistically, their experience of a particular piece of content or product presented on the screen. This output is very sensitive to changes in expression, which makes it useful for estimating a change in sentiment while the person looks at a product or a scene in a video.

POSITIVITY

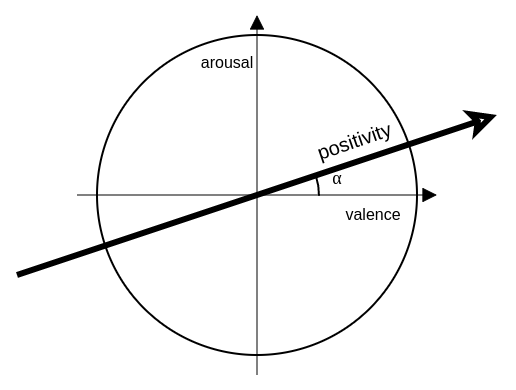

It gauges the intensity of arousal and valence based on a 17-degree angle in Russell’s circumplex model of affect. This proprietary metric, derived from facial expressions, provides an overall measure of an individual’s positivity.

By modifying this angle (alpha) across the whole model, the output can be adapted to multiple types of detection.
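One way to read this description is as a projection of the (valence, arousal) point onto an axis rotated by the angle alpha from the positive valence axis. The sketch below implements that interpretation; it is an assumption about how such a metric could work, not MorphCast's published formula, and `positivity` is a hypothetical name.

```python
import math


def positivity(valence: float, arousal: float, alpha_deg: float = 17.0) -> float:
    """Signed projection of (valence, arousal) onto an axis rotated alpha_deg
    counterclockwise from the positive valence axis (an assumed interpretation)."""
    alpha = math.radians(alpha_deg)
    return valence * math.cos(alpha) + arousal * math.sin(alpha)
```

With alpha = 0 the value reduces to valence alone, and with alpha = 90 degrees to arousal alone; intermediate angles such as 17 degrees weight both, which is one way a single alpha parameter could retarget the metric to different kinds of detection.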

ALARMS

The AI engine outputs several alarms that help developers trigger reactions to possible cheating situations (NO FACE, MORE FACES, LOW ATTENTION…).
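A minimal rule-based sketch of how such alarms could be derived from the face count and an attention score. The alarm labels come from the list above; the rules, the function name `alarms`, and the `low_attention_threshold` value are hypothetical. Consistent with the decision-support guidance in this document, alarms of this kind would prompt human review rather than trigger automatic sanctions.

```python
from typing import List


def alarms(face_count: int, attention: float, low_attention_threshold: float = 0.3) -> List[str]:
    """Return the alarm labels raised for one frame (hypothetical rules)."""
    raised = []
    if face_count == 0:
        raised.append("NO FACE")
    elif face_count > 1:
        raised.append("MORE FACES")
    # Attention is only meaningful when at least one face is visible.
    if face_count > 0 and attention < low_attention_threshold:
        raised.append("LOW ATTENTION")
    return raised
```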

OTHER FEATURES

It estimates the presence of the following face features:

| | | |
|---|---|---|
| Arched Eyebrows | Double Chin | Narrow Eyes |
| Attractive | Earrings | Necklace |
| Bags Under Eyes | Eyebrows Bushy | Necktie |
| Bald | Eyeglasses | No Beard |
| Bangs | Goatee | Oval Face |
| Beard 5 O’Clock Shadow | Gray Hair | Pale Skin |
| Big Lips | Hat | Pointy Nose |
| Big Nose | Heavy Makeup | Receding Hairline |
| Black Hair | High Cheekbones | Rosy Cheeks |
| Blond Hair | Lipstick | Sideburns |
| Brown Hair | Mouth Slightly Open | Straight Hair |
| Chubby | Mustache | Wavy Hair |

Bibliography

- Russell, J., Lewicka, M., & Niit, T. (1989). A cross-cultural study of a circumplex model of affect. Journal of Personality and Social Psychology, 57, 848–856.

- Scherer, K. R. (2005). What are emotions? And how can they be measured? Social Science Information, 44, 695–729.

- Ahn, J., Gobron, S., Silvestre, Q., & Thalmann, D. (2010). Asymmetrical Facial Expressions based on an Advanced Interpretation of Two-dimensional Russell Emotional Model.

- Paltoglou, G., & Thelwall, M. (2012). Seeing stars of valence and arousal in blog posts. IEEE Transactions on Affective Computing, 4(1), 116–123.

- Ekman, P. Basic Emotions.

Constant commitment to conscious and ethical use of Emotion AI

Morphcast Inc. is constantly committed to providing all the information needed for better understanding and use of Emotion AI technologies. Check our guidelines and policies for a responsible use of Emotion AI. Morphcast Inc. has also adopted a code of ethics which you can find here.

Frequently Asked Questions

We also invite you to consult these frequently asked questions, which we continuously update, with details on the most common requests for clarification from our clients on the topics covered in this document.

Contacts

If you experience any damage or abuse using our platform, please inform us using this form on our website.

For any clarifications and/or problems related to the operation of the Software with respect to privacy, please contact privacy@morphcast.com.