Artificial intelligence (AI) has the potential to revolutionize the world, but it can also be used unethically. There are several potential negative consequences of unethical AI use. They include biases and discrimination, violations of privacy and human rights, and unintended harm. Let’s discover why it is crucial to focus on ethical AI use!

Main Ethical AI Concern: Bias and Discrimination

One of the major concerns with AI is bias and discrimination. AI systems are only as unbiased as the data they are trained on: if the data contains inherent biases, the system’s decisions will reflect them. For example, an AI system used for hiring may discriminate against job candidates based on their gender, race, or ethnicity simply because its training data contained biased patterns. This can perpetuate existing societal inequalities and harm marginalized groups.

Unethical AI examples in this context include Amazon’s gender-biased recruiting algorithm, which was found to prefer male candidates over female ones. Such cases illustrate how AI, when not developed and monitored ethically, can reinforce discrimination rather than mitigate it.

Violation of Privacy and Human Rights

Another concern is the violation of privacy and human rights. AI can be used to gather and process vast amounts of personal data, often without individuals’ consent or knowledge. This can lead to privacy violations and the misuse of sensitive information. For example, governments and law enforcement agencies can use facial recognition technology to identify and track individuals without their consent, infringing on individuals’ right to privacy and freedom of movement.

Real-world examples of the unethical use of AI in this context include the deployment of facial recognition technology in public spaces. The technology has been shown to be less accurate for people with darker skin tones, which can lead to the disproportionate misidentification and targeting of certain groups.

Another Potential Problem: Unintended Harm

Unintended harm is also a potential consequence of unethical AI use. It can occur when AI systems are not designed or tested properly, producing outcomes that harm individuals or society as a whole. For example, an AI system used in a medical setting that makes incorrect diagnoses or treatment recommendations can harm patients and put their lives at risk.

Such examples of unethical AI highlight the importance of rigorous testing and the need for ethical oversight in AI development, particularly in high-stakes areas like healthcare.

Preventing Unethical AI Use: Ethical AI to Prevent Negative Outcomes

To prevent these negative outcomes, AI developers and users must prioritize ethical considerations throughout the entire AI life cycle, from design and development to deployment and use. This includes:

- ensuring that the data used to train AI systems is diverse and representative,

- testing AI systems for bias and discrimination,

- and respecting individuals’ privacy and human rights.
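
To make the bias-testing step more concrete, here is a minimal Python sketch of one common check: comparing how often each group receives a positive outcome. The function names, group labels, and data are all hypothetical and invented for illustration, and the 0.8 cutoff follows the "four-fifths" rule of thumb sometimes used in hiring audits. It is a starting point under those assumptions, not a complete fairness evaluation.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where
    `selected` is True when the system chose that candidate.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Divide the lowest selection rate by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical hiring decisions, made up for this example.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

# Assumption: 0.8 is the "four-fifths" rule of thumb, not a universal standard.
needs_review = ratio < 0.8
print(rates, ratio, needs_review)
```

In this made-up data, group_a is selected 60% of the time and group_b 30% of the time, so the ratio is 0.5 and the system would be flagged for a closer look. A low ratio does not prove discrimination on its own, but it signals where human investigation is needed.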

AI ethics are crucial to ensuring that AI technology is used responsibly. By involving human judgment in decision-making processes, particularly in critical areas such as medical diagnoses or criminal justice, we can prevent AI from making decisions that may be biased or harmful. This human oversight is essential to ensure that AI is used in a way that aligns with ethical standards and societal values.
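
One simple way to build in human oversight is to route certain decisions to a person instead of acting on them automatically. The Python sketch below illustrates the idea. The `Prediction` class, the `route_decision` function, and the 0.9 confidence threshold are hypothetical choices made for this example, and a real system would need thresholds and escalation rules set by domain experts.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str         # what the model recommends
    confidence: float  # the model's own confidence, from 0.0 to 1.0


def route_decision(prediction, high_stakes, threshold=0.9):
    """Decide whether a prediction may be applied automatically.

    Anything high-stakes, or anything the model is unsure about,
    is sent to a human reviewer. The threshold is an assumption.
    """
    if high_stakes or prediction.confidence < threshold:
        return "human_review"
    return "auto"


# Hypothetical cases, made up for this example.
routine = route_decision(Prediction("approve", 0.95), high_stakes=False)
diagnosis = route_decision(Prediction("benign", 0.97), high_stakes=True)
uncertain = route_decision(Prediction("approve", 0.60), high_stakes=False)
print(routine, diagnosis, uncertain)
```

Here, the medical diagnosis goes to a human even though the model is very confident, because the cost of an error is high. The design choice is deliberate: in critical areas, confidence alone is not treated as a reason to skip review.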

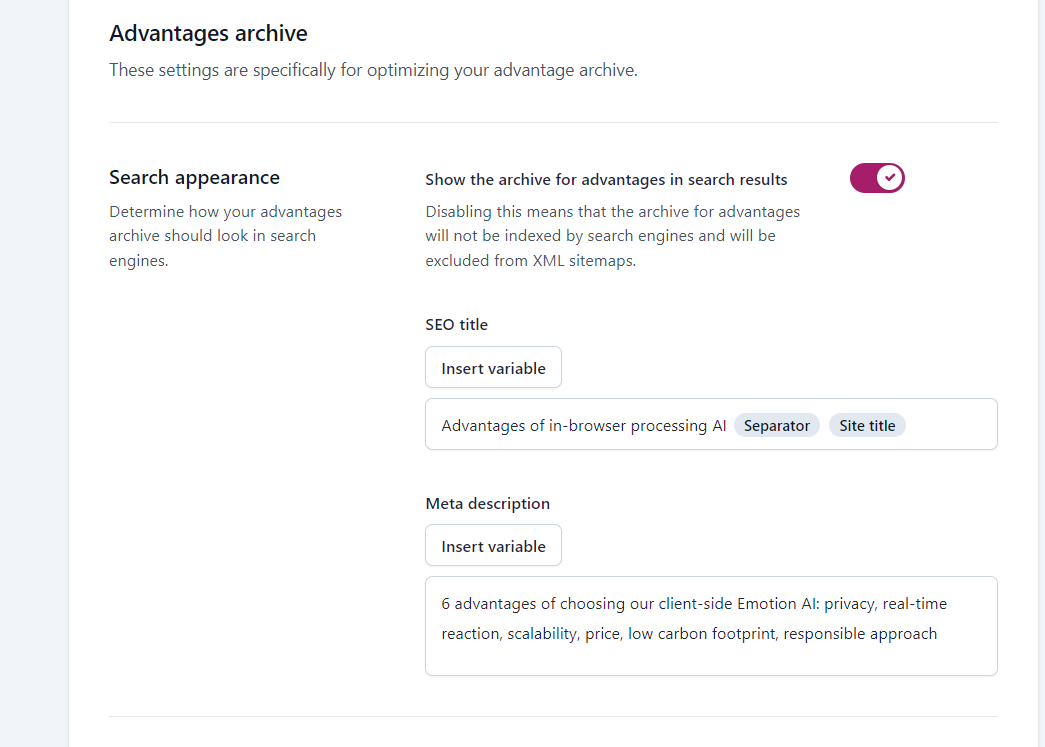

Ethical AI in Practice: MorphCast

An example of a company prioritizing ethical AI is MorphCast, an Emotion AI provider. The client-side processing architecture of MorphCast’s AI engine allows the company to maintain control over the use of its Emotion AI and ensure that it aligns with the company’s ethical code and guidelines. This level of control helps prevent the negative consequences of unethical AI use, such as biases, discrimination, violations of privacy, and unintended harm.

To Sum Up!

It is now more important than ever for AI developers and providers to prioritize ethical considerations in their work. By doing so, they can unlock the full potential of AI while minimizing the risks and negative consequences associated with its use. Is AI unethical? It doesn’t have to be. But it requires a conscious effort from all stakeholders involved to ensure it is used ethically.