Ethical AI is a term used to describe the use of artificial intelligence (AI) in ways that are beneficial to humans. It also means avoiding harmful applications such as:

- Using AI to make decisions about employee hiring, firing or promotion based on factors like race or gender. In the United States, this kind of employment discrimination is illegal under federal law.

- Using facial recognition technology to identify customers at stores and restaurants without their permission. This could violate privacy laws if companies don’t get consent before collecting personal data through cameras installed on their premises.

Transparency Around Data Collection and Use

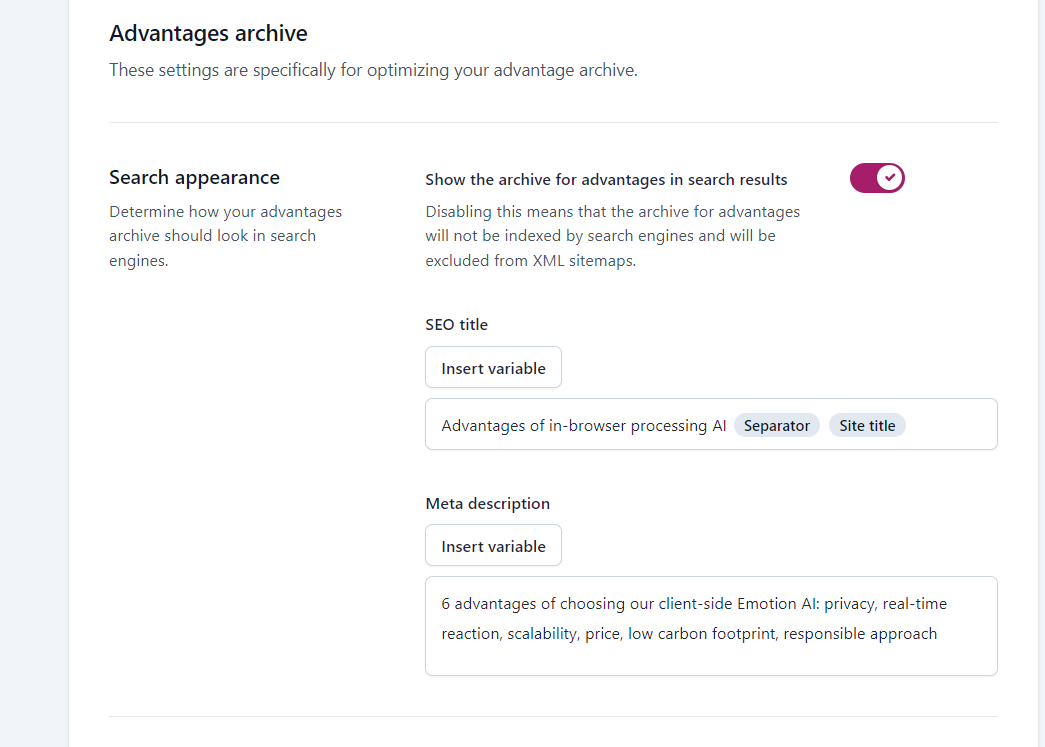

Transparency around data collection and use is crucial to building trust in AI. This includes explaining what data is being collected, why it’s being collected, and how it will be used.

In addition to being transparent about how AI is used in an individual product (for example: “This product uses facial recognition technology”), companies should be transparent about their broader approach to ethical AI use. That includes the policies they have in place for protecting user privacy and for giving users control over their own data.

Explaining How AI is Used in a Particular Product or Service

The first step to building trust in AI is to explain how it works and why you’re using it. You should be able to provide an overview of the system, including:

- What data is being collected?

- How is that data being used?

- Who has access to the information (internal team members or third parties)?

Showcasing Real-World Examples of Ethical AI Use

You can also showcase examples of successful AI projects that have been implemented ethically. This will help your customers understand what is possible with ethical AI and how it can benefit them.

Building Trust in AI Through Communication

Building trust in AI is a two-way street. You need to be open and honest about how you are using it, and address customer concerns head-on. As with any other product or service, customers will have questions about how their data is being used, and they expect companies to be proactive in answering them.

If you’re not already doing so, consider creating an FAQ page that addresses common concerns around data privacy and security as well as how your company uses AI. Consider including links where customers can learn more about specific technologies. For example: “Here’s a link where you can read more about machine learning”.

The Role of Regulation in Building Trust in AI

Regulation is an important part of building trust in AI. It’s one thing to say you’re using ethical AI, but it’s another thing entirely to be able to prove it. One way that companies can do this is by being transparent about how they use data and sharing their policies with consumers.

This may seem like common sense, but some companies don’t make their policies public, and others don’t keep their policies up to date. This can lead consumers to question whether they should trust a company’s claims about its use of AI at all. For example, in July 2019 Facebook announced plans for privacy updates related to facial recognition technology. However, these changes were not due to take effect until 2020, leaving users unsure in the meantime about what information could be shared about them.

Building Trust in AI: Best Practices for Companies

To build trust in AI, companies should:

- Hire diverse teams. Diverse teams are more likely to consider the ethical implications of their work and to make decisions that benefit all members of society.

- Establish ethical guidelines for AI systems and make them publicly available so that everyone knows what’s expected. This will help prevent missteps like Google’s Duplex demo at Google I/O in 2018, which drew criticism because the AI assistant made phone calls without disclosing to the person on the other end of the line that they were speaking with a machine.

- Test and audit AI systems regularly so that any problems can be identified and fixed before they cause harm or embarrassment.

Hiring Diverse Teams

A diverse team is made up of people with different backgrounds, experiences and perspectives. Because its members notice different problems, a diverse team can help you spot and reduce bias in your AI systems.

For example, if you’re building an AI system that helps doctors diagnose diseases or predict patient outcomes, it’s important to make sure that the data used to train your model represents a wide range of patients across ethnicities, socioeconomic statuses and ages. If it doesn’t, you could end up with an algorithm that is biased against certain groups of people, which could lead to unfair treatment for those individuals when they use your product or service.
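One simple way to check this is to measure how much of the training data each group contributes. The snippet below is a minimal sketch, not a complete fairness review: the function name `representation_report`, the `age_band` field, the toy records and the 10% threshold are all assumptions made for illustration.

```python
from collections import Counter


def representation_report(records, attribute, min_share=0.10):
    """Return each group's count and share of the dataset, and flag groups
    whose share falls below min_share (an illustrative threshold)."""
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    report = {}
    for group, count in counts.items():
        share = count / total
        report[group] = {
            "count": count,
            "share": share,
            "under_represented": share < min_share,
        }
    return report


# Hypothetical toy records; a real training set would be far larger.
patients = (
    [{"age_band": "18-39"}] * 6
    + [{"age_band": "40-64"}] * 13
    + [{"age_band": "65+"}] * 1
)

report = representation_report(patients, "age_band", min_share=0.10)
for group, stats in sorted(report.items()):
    flag = "  <-- under-represented" if stats["under_represented"] else ""
    print(f"{group}: {stats['share']:.0%}{flag}")
```

Note that a check like this only sees groups that appear in the data at least once. A group that is missing entirely will not show up in the report, so it helps to compare the result against the list of groups you expect to serve.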

Establishing Ethical Guidelines

As you develop your AI ethics policy, it’s important to consider the following questions:

- What is the purpose of your AI? How will it be used?

- Who are its users and how do they access it? Are there any restrictions on who can use the product or service (for example, age requirements)?

- How does this product or service comply with current legislation around privacy and data protection?

Testing and Auditing AI Systems

Testing and auditing are critical to ensuring that the technology is functioning as intended and to catching biases or flaws before they affect users.

Testing: Testing can be done in a variety of ways, including:

- Human-in-the-loop testing where a human is involved in all stages of the process

- Automated testing where an automated program tests for errors and bugs
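The two approaches above can be combined. The sketch below shows one common variant of human-in-the-loop review, where a person checks only the cases the model is unsure about, together with a small automated test of that routing logic. The function `route_prediction`, the 0.8 confidence threshold and the labels are hypothetical and chosen only for illustration.

```python
def route_prediction(label, confidence, threshold=0.8):
    """Accept confident predictions automatically and send the rest to a
    human reviewer. The threshold is an assumed, illustrative value."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return {"label": label, "decided_by": "model"}
    # Low confidence: no automatic decision, only a suggestion for the reviewer.
    return {"label": None, "decided_by": "human_review", "suggested": label}


def test_routing():
    """Automated checks that the routing rule behaves as described."""
    assert route_prediction("approve", 0.95)["decided_by"] == "model"
    assert route_prediction("approve", 0.40)["decided_by"] == "human_review"
    assert route_prediction("approve", 0.40)["label"] is None


test_routing()
print(route_prediction("approve", 0.55))
```

Where to set the threshold is a judgment call. A higher value sends more cases to people, which costs more time but leaves fewer decisions to the model alone.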

Auditing: Auditing involves checking an AI system’s output against known standards or goals. This may include comparing results with other data sets to ensure accuracy, or looking at how well an algorithm performed compared with its original purpose (e.g., did it produce accurate predictions?).
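As a rough illustration of such an audit, the sketch below compares a model’s predictions with known outcomes, broken down by group. It reports accuracy and the share of positive predictions per group, plus the ratio between the lowest and highest of those shares. The function names, the group labels and the toy data are assumptions; a real audit would use your own evaluation data and whichever metrics suit your use case.

```python
from collections import defaultdict


def audit_by_group(rows):
    """rows: iterable of (group, predicted, actual) tuples with 0/1 values.
    Returns per-group accuracy and positive-prediction (selection) rate."""
    totals = defaultdict(lambda: {"n": 0, "correct": 0, "selected": 0})
    for group, predicted, actual in rows:
        stats = totals[group]
        stats["n"] += 1
        stats["correct"] += int(predicted == actual)
        stats["selected"] += int(predicted == 1)
    return {
        group: {
            "accuracy": s["correct"] / s["n"],
            "selection_rate": s["selected"] / s["n"],
        }
        for group, s in totals.items()
    }


def selection_rate_ratio(summary):
    """Lowest group selection rate divided by the highest (1.0 means equal)."""
    rates = [s["selection_rate"] for s in summary.values()]
    highest = max(rates)
    return min(rates) / highest if highest > 0 else 1.0


# Hypothetical audit sample: (group, model prediction, known outcome).
rows = [
    ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 0, 0), ("group_a", 1, 1),
    ("group_b", 0, 1), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 0),
]

summary = audit_by_group(rows)
ratio = selection_rate_ratio(summary)
for group, stats in sorted(summary.items()):
    print(group, stats)
print(f"selection rate ratio: {ratio:.2f}")
```

In this toy sample both groups have the same accuracy, yet the model selects one group three times as often as the other. That is the kind of gap an audit is meant to surface, and it shows why accuracy alone is not enough to judge whether a system treats groups fairly.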

Conclusion

In the end, it’s important for companies to build AI systems that are ethical, transparent and trustworthy. Doing so helps build trust with customers and gives them a reason to keep using the product or service.

Companies can also follow best practices for building ethical AI systems by:

- Creating a clear, concise privacy policy that outlines exactly how data will be collected, used and shared by the company;

- Ensuring that all employees have access to this policy so they know what information is being collected about customers;

- Being honest with customers about what data is being collected so there aren’t any surprises later on.